The kubriX Architecture

kubriX is an Internal Developer Platform (IDP) distribution: not a monolithic platform, but a curated collection of 15+ CNCF-proven open-source tools, pre-integrated and deployable as one. You get a production-ready platform without months of integration work.

This page explains the architectural decisions that make kubriX work, and why they matter for your team.

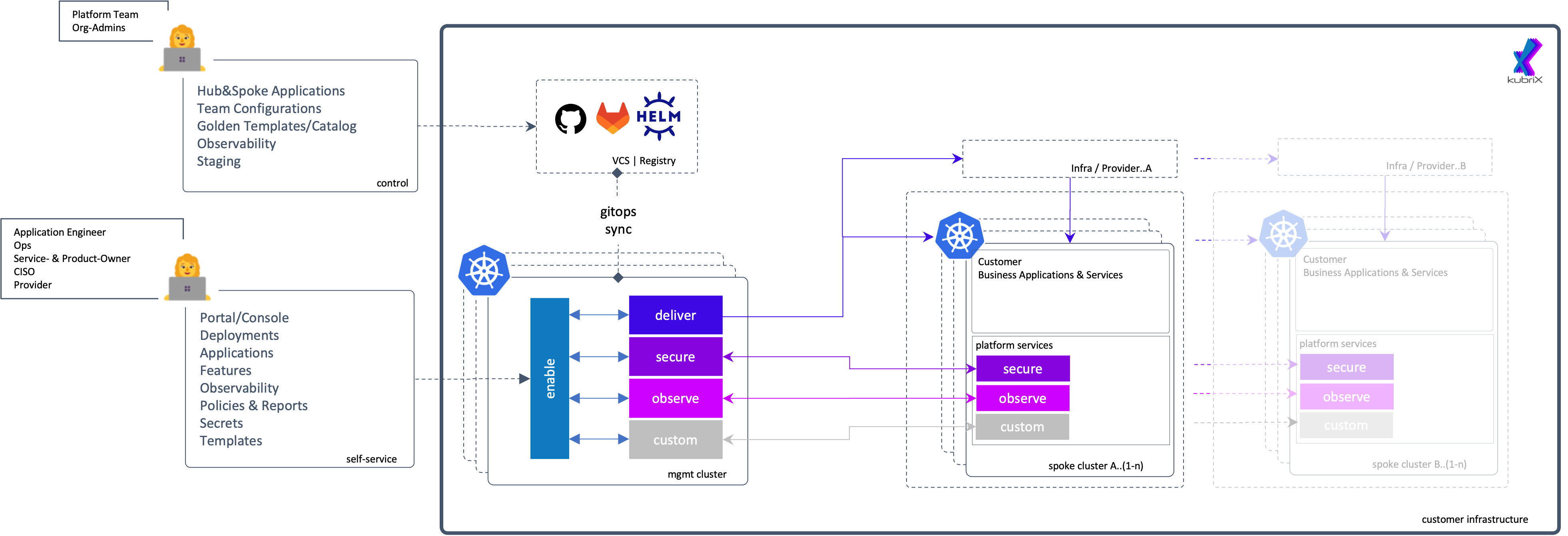

Hub and spoke - one platform, many teams

The kubriX deployment model is built on a hub-and-spoke architecture: a single hub cluster hosts all centralized platform services, while spoke clusters run your application workloads independently.

Hub Cluster

The hub runs all shared platform services:

- GitOps controllers managing all spoke deployments

- Centralized authentication, policy enforcement, and secret management

- Cross-cluster observability aggregation

- Template and configuration repository

Spoke Clusters

Spokes run application workloads with platform capabilities available locally:

- Teams deploy through self-service interfaces - no ops bottleneck

- Security and observability are present in every spoke automatically

- All configurations synchronized from the hub via GitOps
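
One way to picture the hub driving many spokes: the hub keeps a list of spoke clusters and derives one Argo CD `Application` per spoke from a shared Git path. The sketch below builds such manifests as Python dictionaries. The field names follow Argo CD's `Application` resource, but the repository URL, path, namespaces, cluster names, and the `spoke_applications` helper are hypothetical and are not taken from kubriX itself.

```python
from typing import Dict, List

# Hypothetical repository URL, for illustration only; not part of kubriX.
PLATFORM_REPO = "https://git.example.com/platform/config.git"


def spoke_applications(spokes: Dict[str, str], path: str = "spoke-baseline") -> List[dict]:
    """Build one Argo CD Application manifest per spoke cluster.

    `spokes` maps a spoke name to its Kubernetes API server URL.
    """
    apps = []
    for name, server in sorted(spokes.items()):
        apps.append({
            "apiVersion": "argoproj.io/v1alpha1",
            "kind": "Application",
            "metadata": {"name": f"{name}-baseline", "namespace": "argocd"},
            "spec": {
                "project": "default",
                "source": {
                    "repoURL": PLATFORM_REPO,
                    "targetRevision": "main",
                    "path": path,
                },
                # The destination is the spoke; the controller itself runs on the hub.
                "destination": {"server": server, "namespace": "platform-system"},
                # Automated sync keeps each spoke aligned with what is in Git.
                "syncPolicy": {"automated": {"prune": True, "selfHeal": True}},
            },
        })
    return apps


if __name__ == "__main__":
    demo = spoke_applications({
        "team-a": "https://team-a.example.com:6443",
        "team-b": "https://team-b.example.com:6443",
    })
    for app in demo:
        print(app["metadata"]["name"], "->", app["spec"]["destination"]["server"])
```

Adding a spoke in this model means adding one entry to the mapping; the shared path stays the same, which is the sense in which spokes can be added without rearchitecting.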

Why this matters

- One place to govern → policies, identity, and secrets managed centrally, not replicated per team

- Full isolation → a failing workload or misconfiguration in one spoke doesn't affect others

- Scales with you → add spokes as your organization grows, without rearchitecting

- Works at any size → equally suited for a single team running dev/staging/prod or dozens of independent product teams

Security by design

Security in kubriX is not a feature you enable; it is the foundation everything else runs on. Every spoke inherits secure defaults automatically. There is nothing to opt into.

| Layer | Tool | What it does |
|---|---|---|
| Identity & SSO | Keycloak | Single sign-on and OIDC across all platform services |
| Policy enforcement | Kyverno | Policy-as-code enforced at admission time - before workloads run |
| Secrets management | OpenBao + External Secrets | No plaintext secrets in Git, ever |
| Audit & compliance | Built-in | Every action is logged, so you are compliance-ready from day one |

This is what "security by design" means in practice: your developers ship into a secure environment by default, and your platform team doesn't have to enforce it manually.
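
To make "enforced at admission time" concrete, here is a conceptual model of an admission check in plain Python. Kyverno policies are written as Kubernetes resources, not Python, so this only illustrates the idea of rejecting a workload before it runs. The two rules and the `admit` function are assumptions chosen for the example, not kubriX defaults.

```python
from typing import List, Tuple


def admit(pod: dict) -> Tuple[bool, List[str]]:
    """Return (allowed, violations) for a Pod manifest given as a dict.

    Conceptual stand-in for an admission policy: if any rule is violated,
    the request is rejected before the workload is scheduled.
    """
    violations = []

    # Hypothetical rule 1: every Pod must carry a `team` label.
    labels = pod.get("metadata", {}).get("labels", {})
    if "team" not in labels:
        violations.append("missing required label 'team'")

    # Hypothetical rule 2: no container may run in privileged mode.
    for container in pod.get("spec", {}).get("containers", []):
        if container.get("securityContext", {}).get("privileged", False):
            violations.append(f"container '{container.get('name', '?')}' is privileged")

    return (not violations, violations)


if __name__ == "__main__":
    pod = {
        "metadata": {"name": "demo", "labels": {}},
        "spec": {"containers": [
            {"name": "app", "securityContext": {"privileged": True}},
        ]},
    }
    allowed, reasons = admit(pod)
    print("allowed" if allowed else "rejected", reasons)
```

The point of the model is the ordering: the check runs on the submitted manifest, so a non-compliant workload never starts and developers get the reason back immediately.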

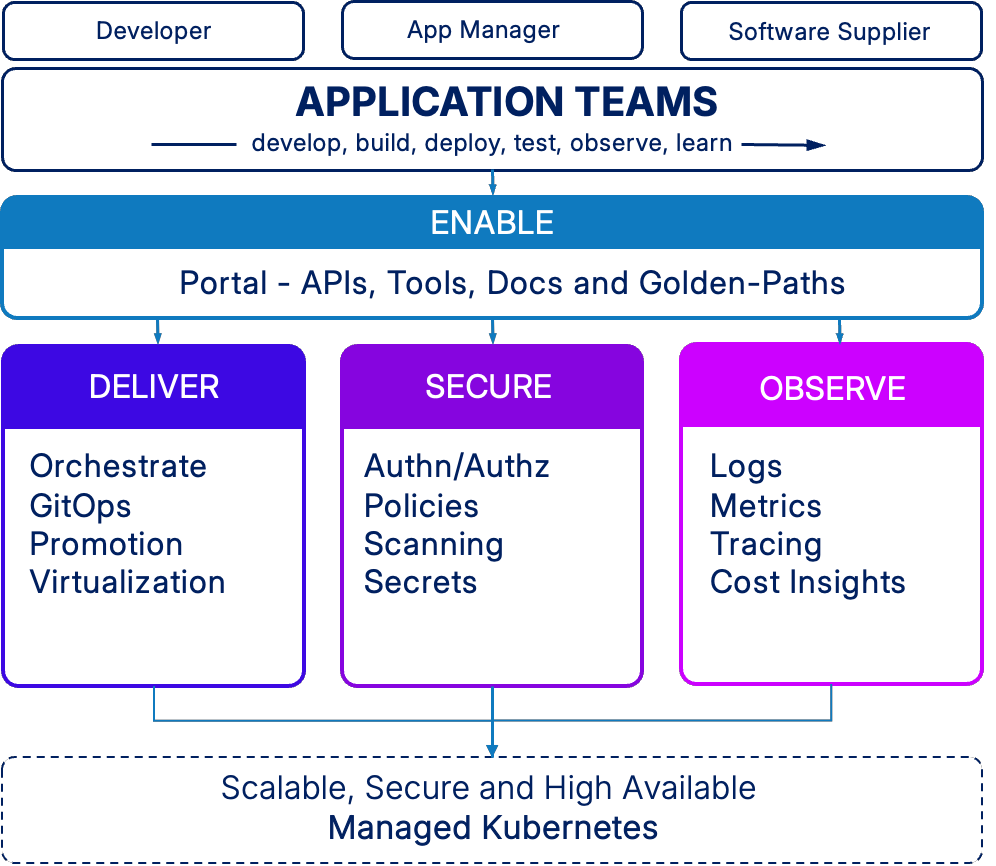

4 core capabilities

kubriX organizes platform functionality into 4 pillars that address the full lifecycle of running Kubernetes at scale:

🧩 Enable - developers ship without waiting

Give development teams the self-service tools they need to move independently:

- Developer Portal (Backstage) → centralized interface for APIs, documentation, and workflows

- Service Catalog → browse and discover available services and templates

- Golden Paths → standardized deployment patterns that encode organizational best practices

- Template System → pre-configured templates for common application types

🚀 Deliver - consistent deployments across every environment

GitOps-based deployment and lifecycle management that removes manual handoffs:

- GitOps Engine (Argo CD) → declarative, Git-driven continuous deployment

- Multi-Stage Promotion (Kargo) → controlled progression from dev to staging to production

- Application Orchestration → automated rollouts, rollbacks, and health checks

- Virtualization Support → run VMs alongside containers when legacy workloads require it
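
The controlled progression from dev to staging to production can be modeled as a gate: a version moves into a stage only if it is already running, and healthy, in the stage before it. The sketch below is a conceptual model in plain Python. Kargo itself is configured through Kubernetes resources, and the `promote` function, the stage names as identifiers, and the health flags are assumptions made for illustration.

```python
from typing import Dict, Optional

# Stage order as described in the text.
STAGES = ["dev", "staging", "production"]


def promote(
    deployed: Dict[str, Optional[str]],
    healthy: Dict[str, bool],
    target: str,
) -> Dict[str, Optional[str]]:
    """Promote the version running in the previous stage into `target`.

    Returns a new mapping of stage -> version and leaves `deployed` untouched.
    Raises ValueError if the target is unknown or is the first stage, or if
    the upstream stage has nothing deployed or is not healthy.
    """
    idx = STAGES.index(target)  # raises ValueError for an unknown stage
    if idx == 0:
        raise ValueError("the first stage receives new versions directly")
    upstream = STAGES[idx - 1]
    version = deployed.get(upstream)
    if version is None:
        raise ValueError(f"nothing deployed in {upstream}")
    if not healthy.get(upstream, False):
        raise ValueError(f"{upstream} is not healthy; refusing to promote {version}")
    updated = dict(deployed)
    updated[target] = version
    return updated


if __name__ == "__main__":
    state = {"dev": "v2", "staging": "v1", "production": "v1"}
    state = promote(state, {"dev": True}, "staging")
    print(state)
```

Because a stage can only receive what its upstream stage already runs, a version cannot reach production in this model without passing through staging first.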

🔐 Secure - built in, not bolted on

Identity, policy, and secrets management that every team benefits from automatically:

- Identity Provider (Keycloak) → centralized authentication and Single Sign-On

- Authorization → RBAC and fine-grained permissions across all services

- Policy Engine (Kyverno) → admission control, compliance validation, and governance

- Secrets Management (OpenBao) → centralized storage with automatic injection into workloads

- Security Scanning → vulnerability detection integrated into deployment pipelines

📊 Observe - full visibility, zero guesswork

Operational visibility and cost tracking across all clusters and teams:

- Log Aggregation → centralized collection and search across all clusters

- Metrics Collection → time-series data for performance monitoring and alerting

- Distributed Tracing → request flow tracking across microservices

- Cost Analytics (Kubecost) → resource usage and cost per team, namespace, or application

- Unified Dashboards (Grafana) → single pane of glass for platform and application health

GitOps - the operating model

All platform and application configurations are managed declaratively through Git:

- Platform team maintains cluster configurations, policies, and templates in Git

- Application teams deploy using approved templates through the portal or Git directly

- Argo CD detects changes and reconciles desired state across all clusters

- Policy validation and secrets injection happen automatically at deployment time

- Every change is auditable through Git history, with full rollback at any point

This ensures consistent configuration across environments and is the backbone of kubriX's reliability.
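
The "detect changes and reconcile" step described above can be sketched as a diff between the desired state (what Git declares) and the live state (what a cluster runs). This is a simplified model in plain Python, not Argo CD's implementation; the `plan` and `reconcile` functions and the dictionary-based state are assumptions made for illustration.

```python
from typing import Dict, List, Tuple

State = Dict[str, dict]  # resource name -> spec


def plan(desired: State, live: State) -> List[Tuple[str, str]]:
    """Compute the actions that move `live` toward `desired`."""
    actions = []
    for name, spec in sorted(desired.items()):
        if name not in live:
            actions.append(("create", name))
        elif live[name] != spec:
            actions.append(("update", name))
    for name in sorted(live):
        if name not in desired:
            # Resources that are no longer declared in Git are pruned.
            actions.append(("delete", name))
    return actions


def reconcile(desired: State, live: State) -> State:
    """Apply the plan to a copy of `live`; the result matches `desired`."""
    result = dict(live)
    for action, name in plan(desired, live):
        if action == "delete":
            del result[name]
        else:
            result[name] = desired[name]
    return result


if __name__ == "__main__":
    desired = {"web": {"replicas": 3}, "api": {"replicas": 2}}
    live = {"web": {"replicas": 1}, "old-job": {"replicas": 1}}
    print(plan(desired, live))
    print(reconcile(desired, live))
```

Rolling back in this model is the same operation: revert the commit, and the next reconcile moves the cluster to the earlier desired state.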

The component stack

Every tool in kubriX was selected against the same principles:

- Production-proven → used by thousands of companies at scale

- CNCF aligned → part of or aligned with the Cloud Native ecosystem

- Active community → maintained with strong long-term support

- Kubernetes-native → built for Kubernetes, not bolted on

- Replaceable → swap any component without rebuilding the platform

| Capability | Tool |
|---|---|
| Developer portal | Backstage |
| GitOps engine | Argo CD |
| GitOps promotion | Kargo |
| Identity & SSO | Keycloak |
| Secrets | OpenBao + External Secrets Operator |
| Policy | Kyverno |
| Observability | Grafana LGTM stack |
| Cost management | Kubecost |
| Ingress | Traefik |
| Backup & recovery | Velero + UI |

Design principles

These principles guide every decision in kubriX - from architecture to tool selection.

Modular and replaceable

Each component serves a clear purpose and can be swapped when needed.

- Replace individual tools without rebuilding the platform

- Keep existing solutions where they already work

- Stable interfaces ensure long-term flexibility

Kubernetes-native

Everything runs as standard Kubernetes resources - no proprietary layers.

- Works with familiar tools like `kubectl` and Helm

- Uses native APIs and CRDs

- No hidden abstractions or lock-in

GitOps-first

All configuration is declarative, versioned, and driven from Git.

- Cluster state is defined in Git, not applied manually

- Every change is traceable, auditable, and reversible

- Consistent workflows across all environments

Production-tested

Only proven technologies in the critical path.

- Used successfully in real-world production environments

- Backed by active communities

- Designed for long-term reliability and support